Product:

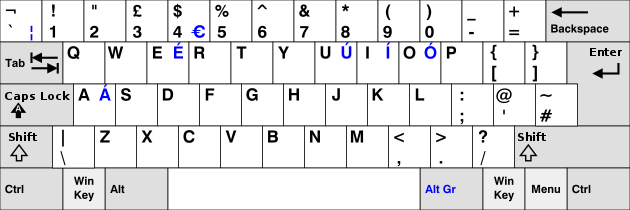

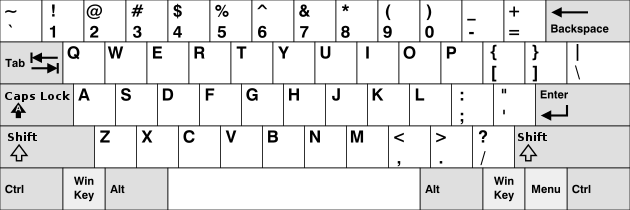

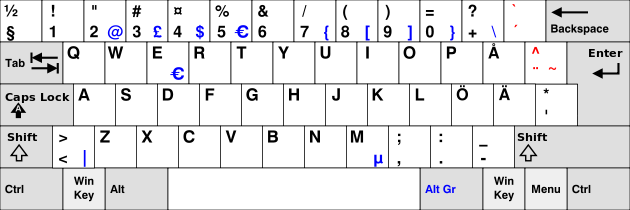

Planning Analytics 2.0.9.3

Microsoft Windows 2019 server

Issue:

You have created a TM1 Websheet in Excel (TM1 Perspectives) with an Action button that runs a process.

This works fine in TM1 Web, but not in TM1 Application Web (the old Contributor). When you click the button on the web page (inside a Contributor session), nothing happens.

Solution:

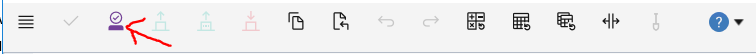

To click websheet buttons in TM1 Application Web, you must first take ownership.

Click the Take Ownership icon.

The buttons in the websheet will then work in TM1 Application Web (Contributor session).

When you are done, release ownership so that others can access that company node.

More information:

https://www.ibm.com/docs/en/cognos-tm1/10.2.2?topic=applications-ownership-bouncing-releasing

Data that you can edit has a white background. Read-only data has a gray background. If you are not the current owner, the data opens in a read-only view. To start adding or editing data, or to click a button, click Take Ownership.

You can edit data only if it has a workflow state of Available or Reserved. The icons indicate the workflow state.
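The two rules above (you must be the owner, and the node must be in an editable workflow state) can be read as a single check. The following is only a simplified sketch of that reading, not TM1 code: the names `WorkflowState`, `EDITABLE_STATES`, and `can_interact` are hypothetical, and `OTHER` is a placeholder for any state other than Available or Reserved.

```python
from enum import Enum


class WorkflowState(Enum):
    AVAILABLE = "Available"
    RESERVED = "Reserved"
    OTHER = "Other"  # hypothetical placeholder for any other workflow state


# States in which the quoted documentation says data can be edited.
EDITABLE_STATES = {WorkflowState.AVAILABLE, WorkflowState.RESERVED}


def can_interact(state: WorkflowState, is_owner: bool) -> bool:
    """Return True if data entry and websheet buttons should respond.

    Assumption: a user who has not taken ownership only gets a
    read-only view, so both conditions must hold.
    """
    return is_owner and state in EDITABLE_STATES
```

Under this reading, `can_interact(WorkflowState.RESERVED, is_owner=False)` is false, which matches the symptom described in the issue: the button does nothing until you take ownership.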

Ownership availability for a particular node can be changed depending on how the parent node is opened. For example, contributors and reviewers who open the parent node in IBM® Cognos® Insight are not able to take ownership of the node. See the TM1® Performance Modeler documentation and the Cognos Insight documentation for details on ownership and nodes.

After taking ownership, use the Release icon to release the data so other people can use it. In Cognos TM1 Application Web, you must submit all nodes at the level at which you take ownership and you can only release ownership at the level you have taken ownership.

https://www.ibm.com/docs/en/planning-analytics/2.0.0?topic=applications-working-data

You can insert an Action button into a worksheet so users can run a TurboIntegrator process and/or navigate to another worksheet. Users can access these buttons when working with worksheets in Microsoft Excel with TM1, or with Websheets in TM1 Web.

Action buttons also work in TM1 Application Web, even though the IBM documentation does not state this.

https://www.ibm.com/docs/en/planning-analytics/2.0.0?topic=excel-action-buttons

https://www.ibm.com/docs/en/SSD29G_2.0.0/com.ibm.swg.ba.cognos.tm1_inst.2.0.0.doc/tm1_inst.pdf