Product:

Cognos Analytics 11.1.x

Microsoft Windows 2019 server

Microsoft SQL server

Problem:

I have a new Cognos environment and want an easy way to copy the content store from the old environment to the new one. The new Cognos environment has the same or a newer version of Cognos Analytics.

Solution:

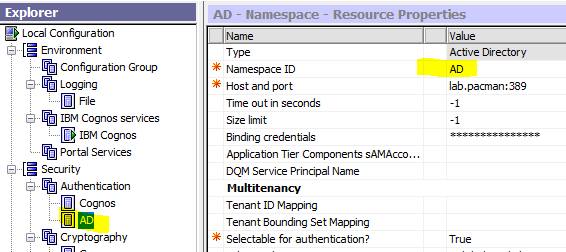

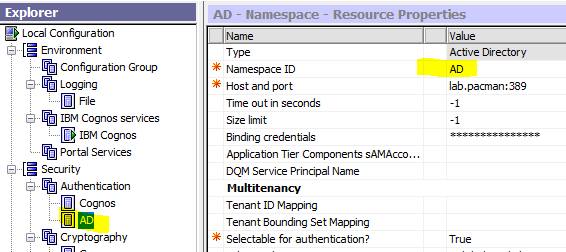

To carry the security values over, you must have exactly the same Active Directory connection setup in both the old and the new environment. Double-check in Cognos Configuration that the namespace is the same.

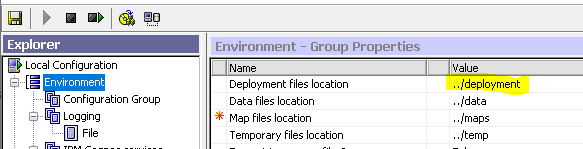

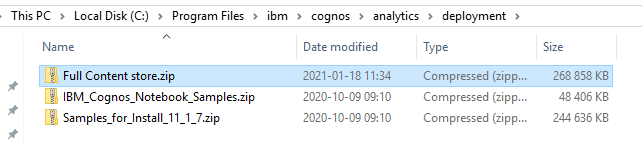

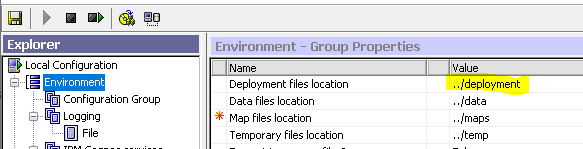

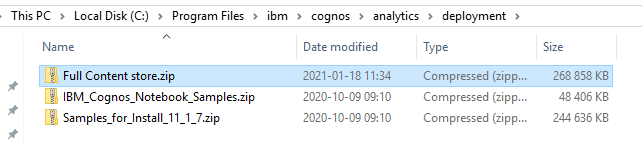

On the old server – check in Cognos Configuration where the zip file is stored.

This is normally in folder C:\Program Files\ibm\cognos\analytics\deployment on your CA11 server.

Browse to ..ibmcognos from your web browser. Log in as administrator in Cognos Connection.

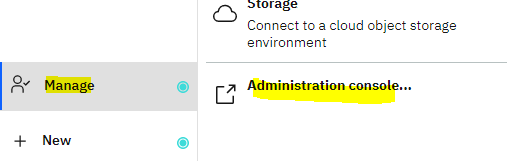

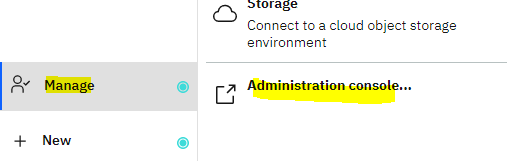

Click Manage – Administration console

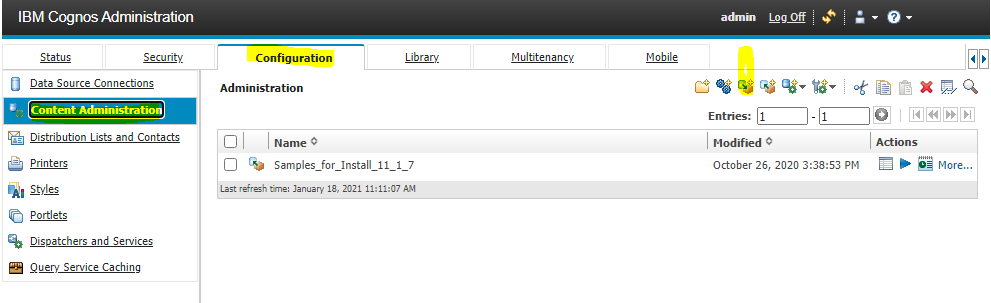

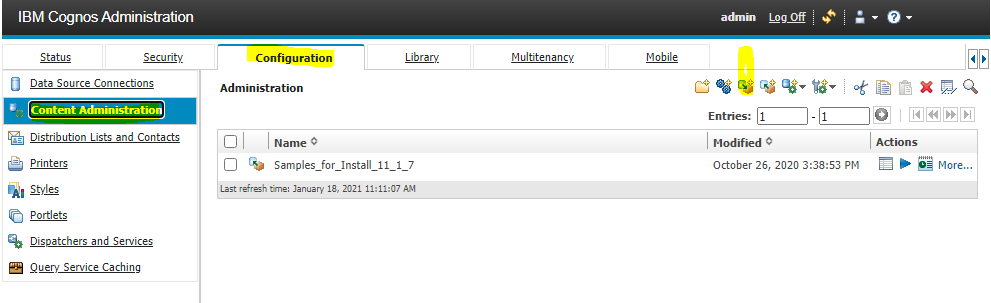

Click the Configuration tab

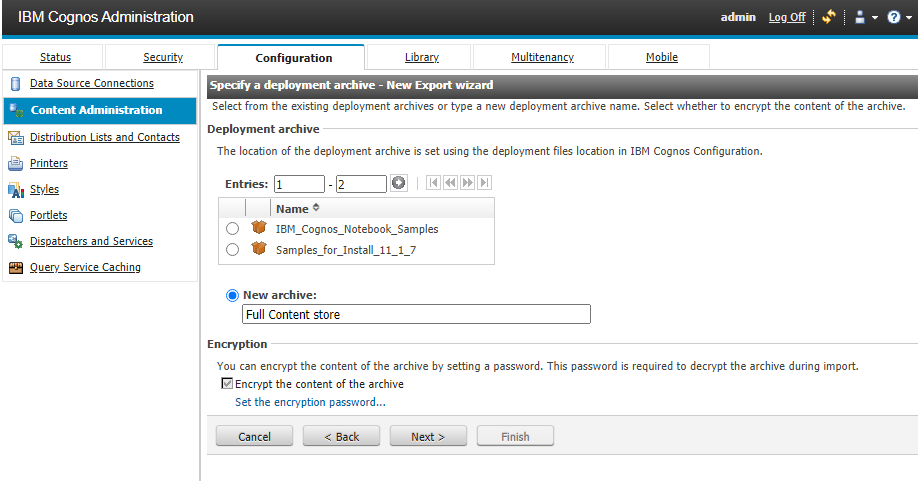

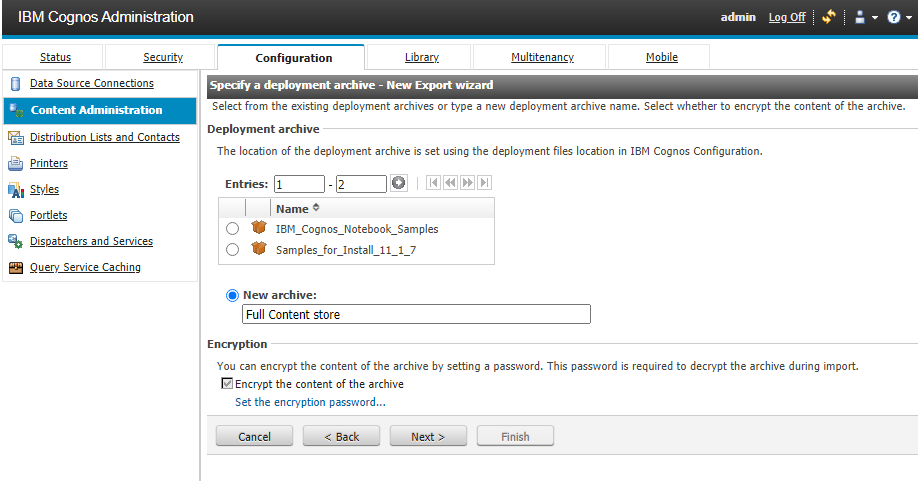

Click Content Administration and click on the export icon

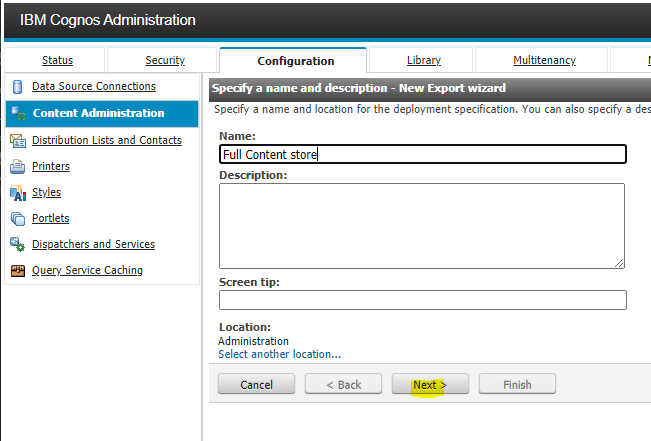

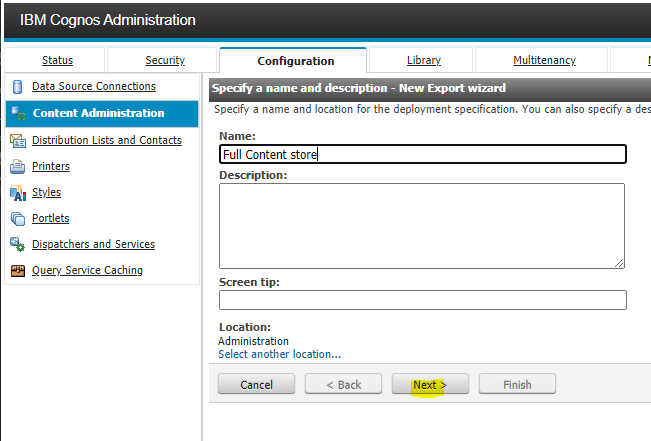

Enter a name and click the Next button

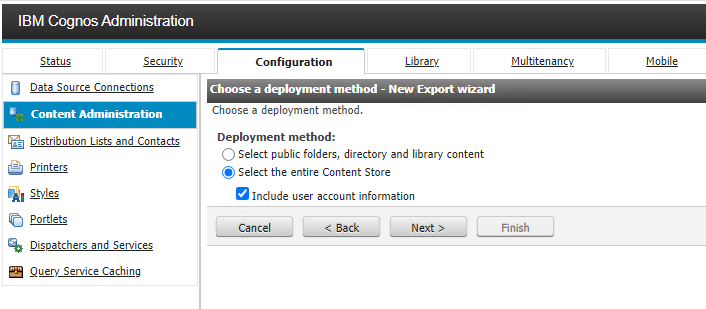

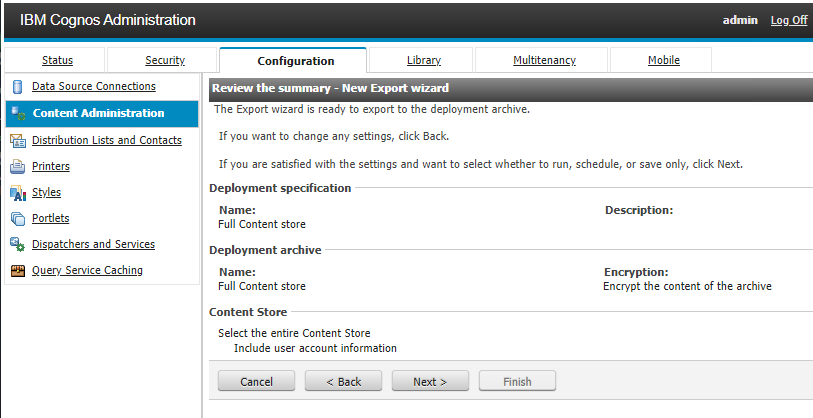

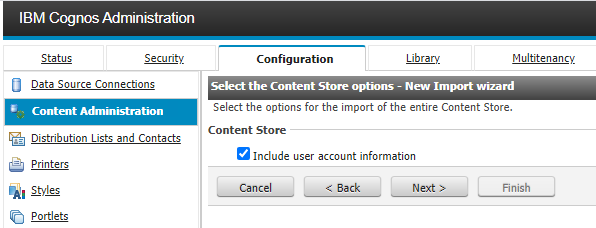

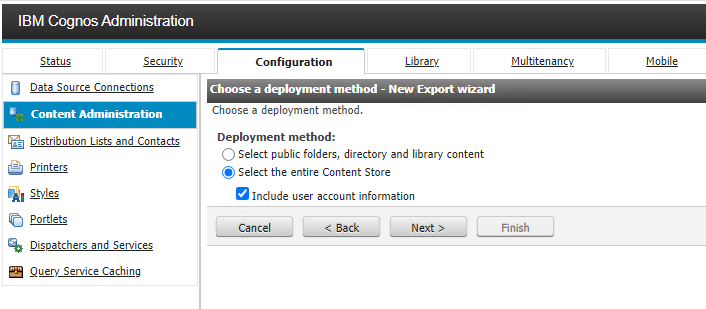

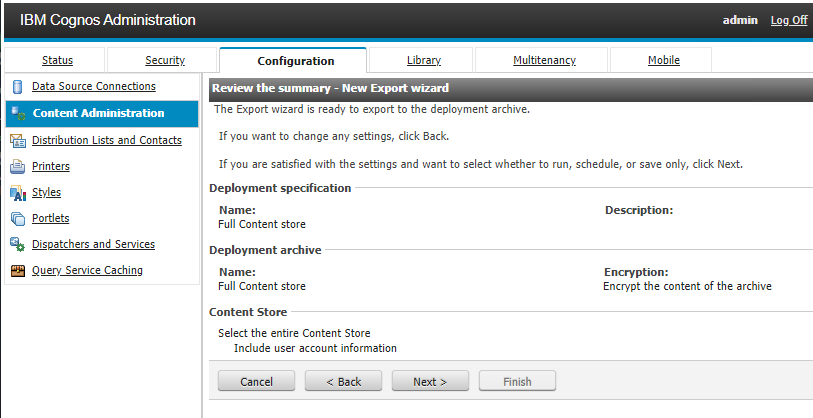

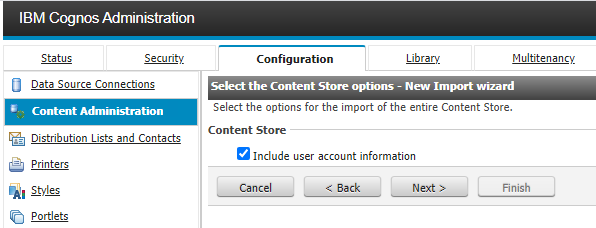

Mark “Select the entire Content Store” and check “Include user account information” to carry over as much information as possible. Click Next

Click Next

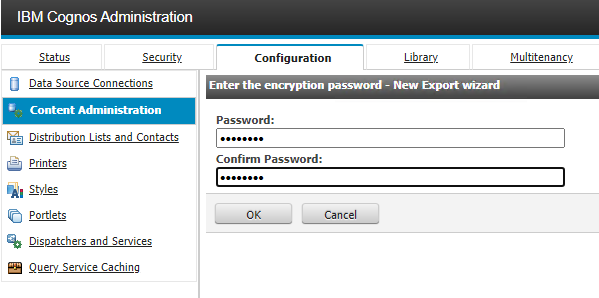

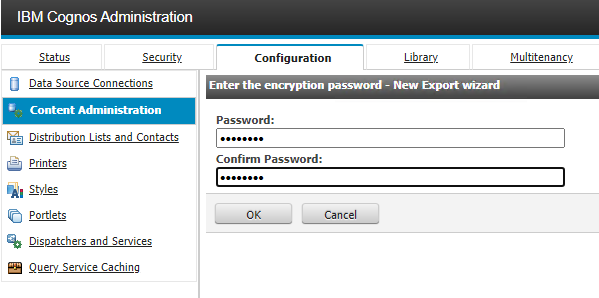

Enter a password you can remember and click OK

Click Next

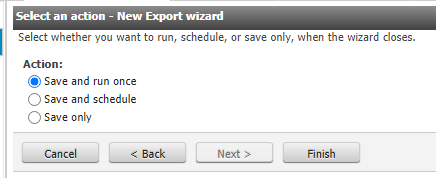

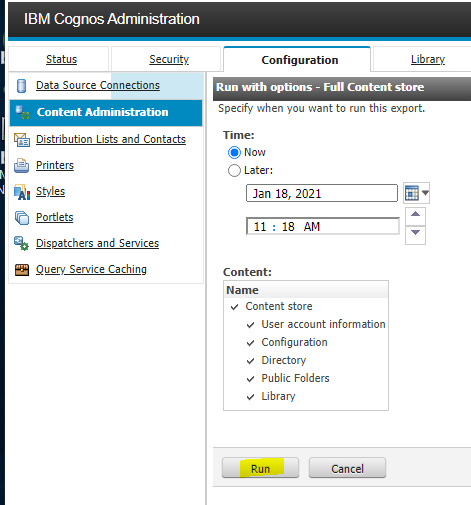

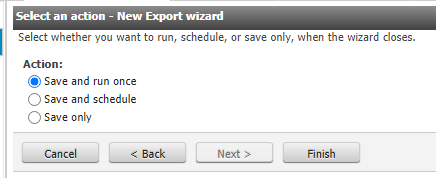

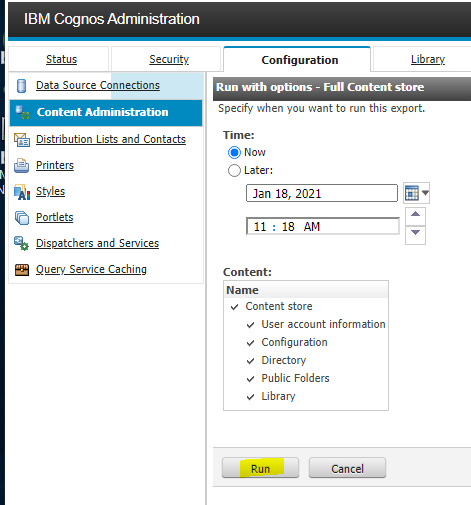

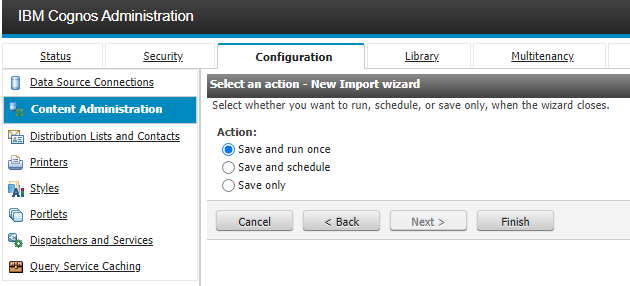

Select “save and run once” and click Finish

Click Run

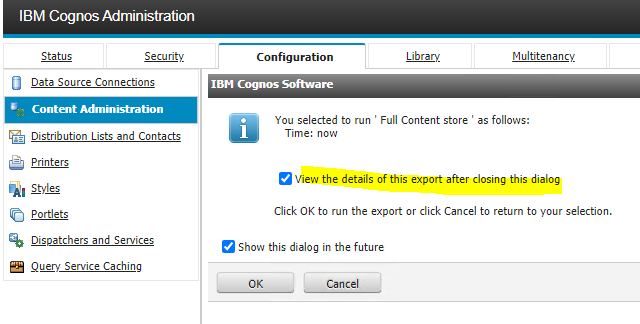

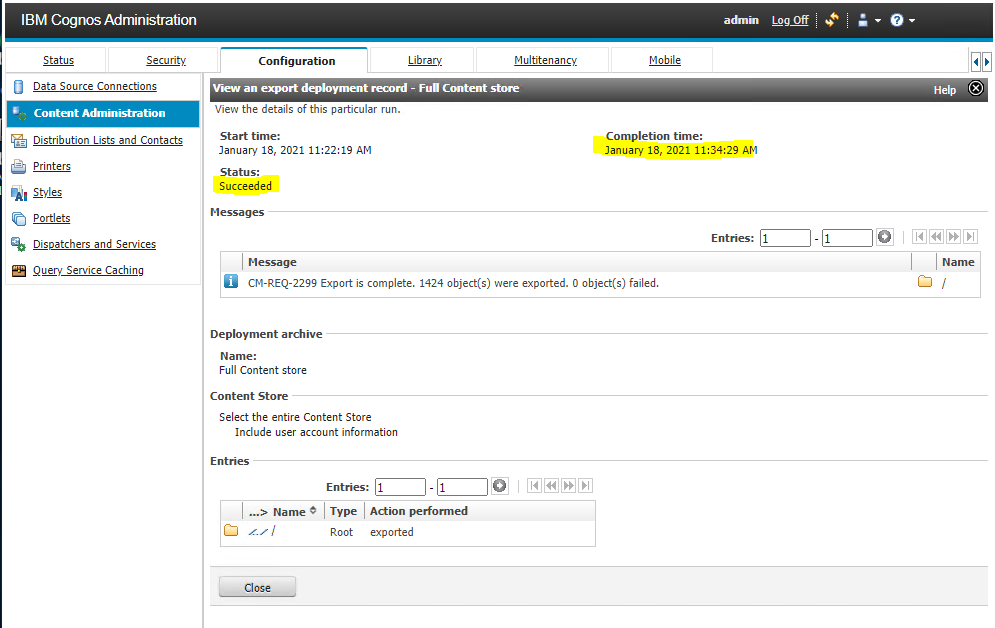

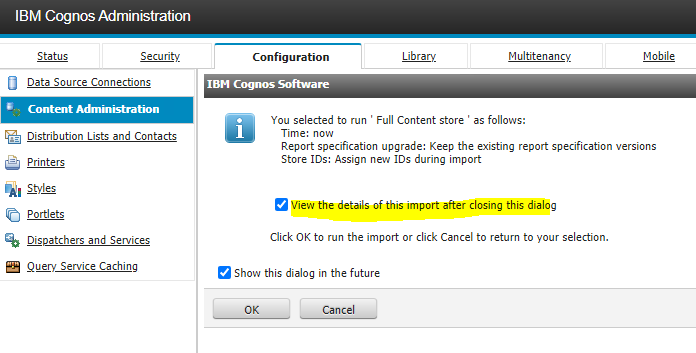

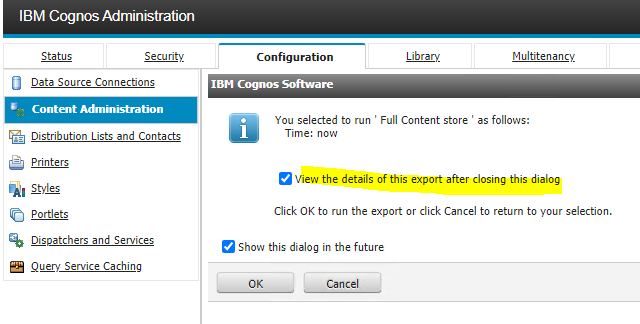

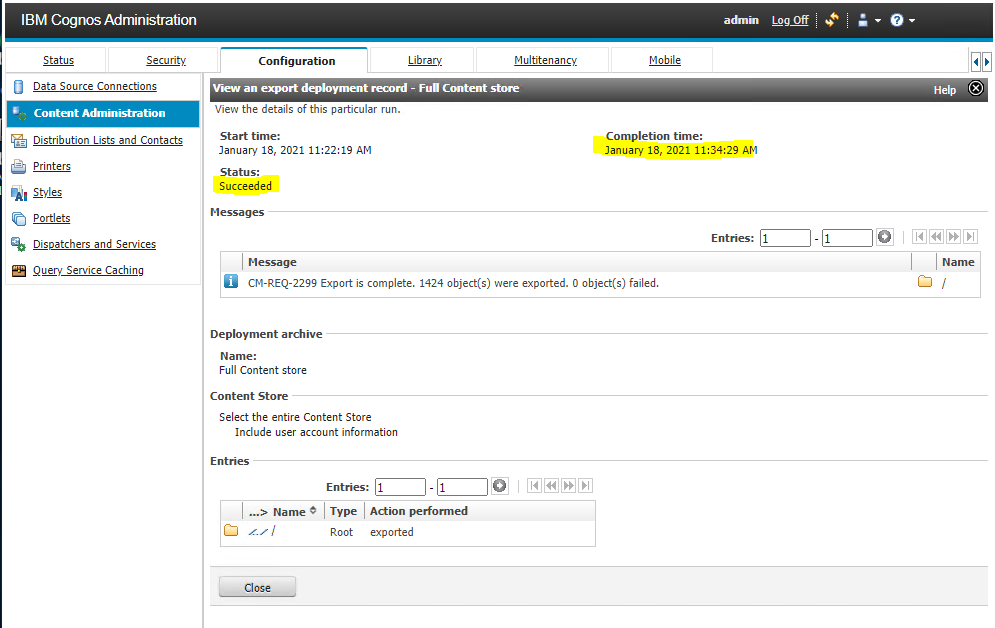

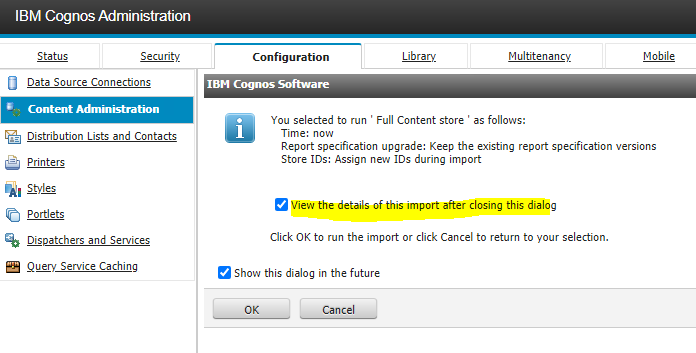

Mark “View the details of this export after closing this dialog” and click OK.

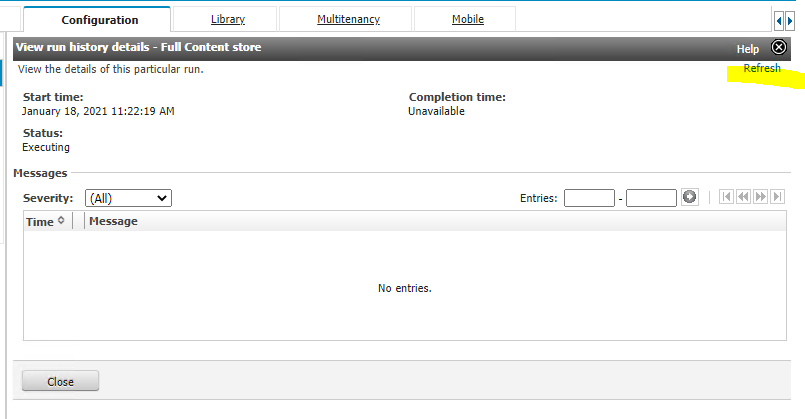

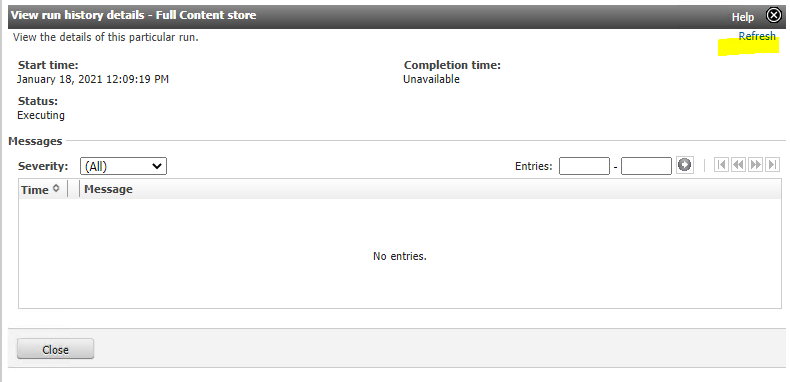

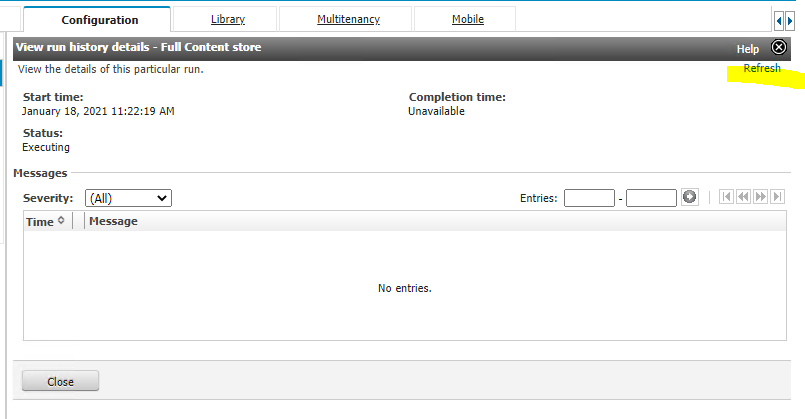

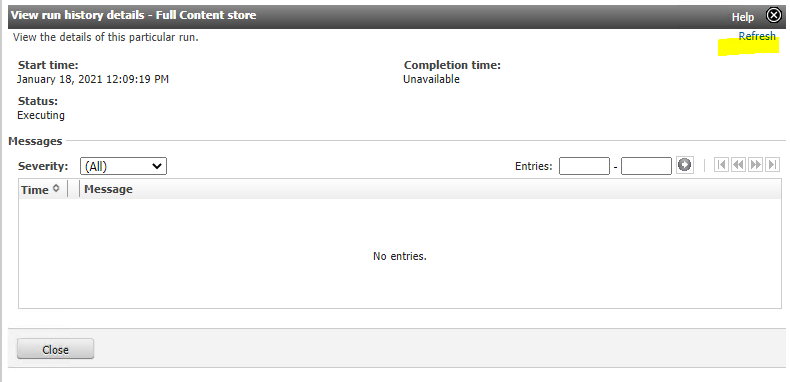

Click the blue “refresh” link every 10 minutes to see if the export has finished.

Wait until the status says it is finished. The status above is not a finished status, because there is no completion time.

This can take 30 minutes, depending on the amount of data in your Content Store.

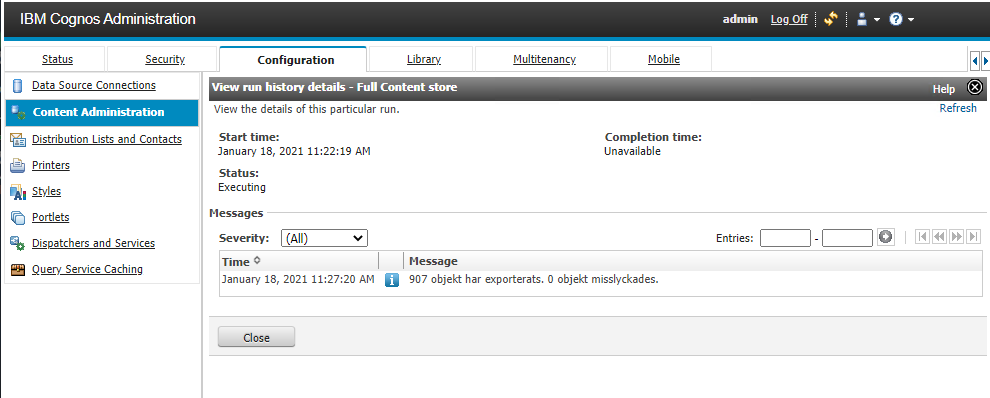

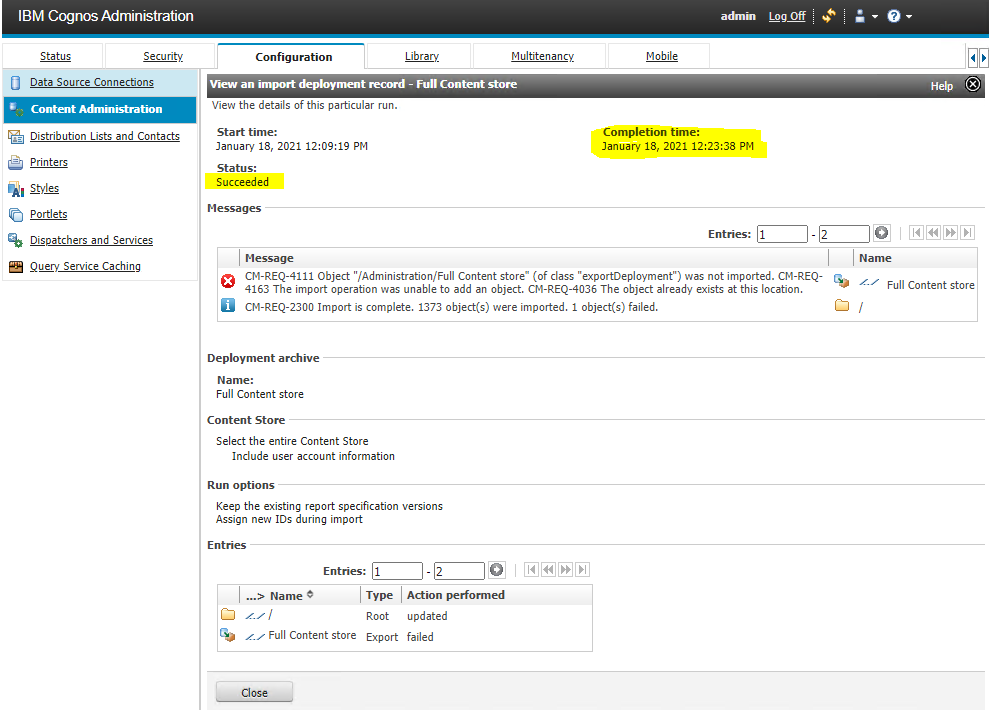

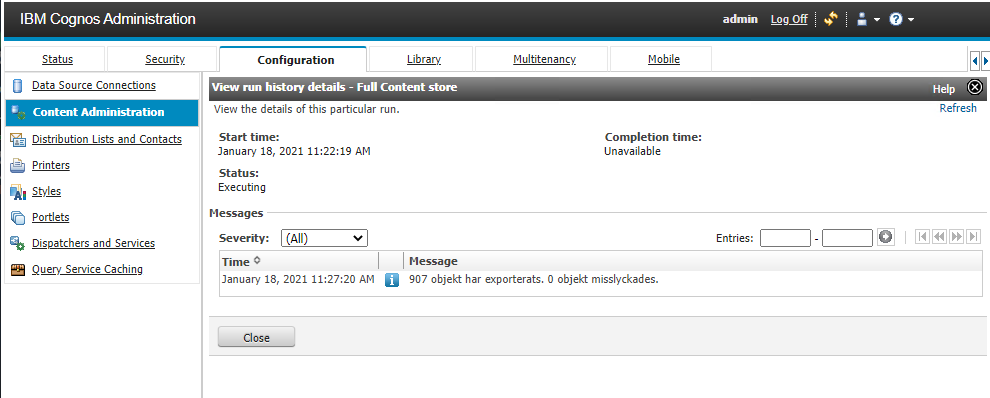

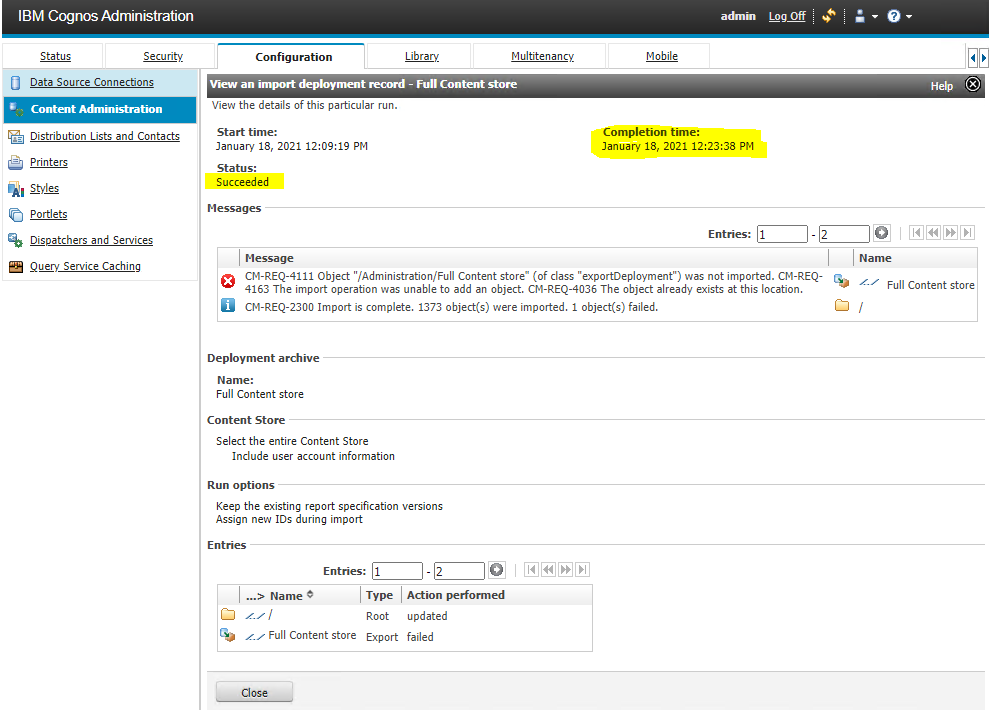

When the export has succeeded, click Close.

When done, go to Windows File Explorer and copy the zip file from the old Cognos BI server to your new Cognos Analytics server. Place the file in the deployment folder you are going to use.
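If you prefer to script the copy instead of using file explorer, the step above could be sketched roughly as follows. This is only a minimal example and not part of the Cognos tooling: the function names `sha256_of` and `copy_archive` are made up for illustration, and the checksum comparison is an extra safety check that the large zip file arrived intact.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def copy_archive(source: Path, target_dir: Path) -> Path:
    """Copy a deployment archive into target_dir and verify the copy."""
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / source.name
    shutil.copy2(source, target)  # copies the content and the timestamps
    if sha256_of(source) != sha256_of(target):
        raise OSError(f"Checksum mismatch after copying {source.name}")
    return target
```

A call could look like `copy_archive(Path(r"\\oldserver\deployment\full_content_store.zip"), Path(r"C:\Program Files\ibm\cognos\analytics\deployment"))`, where the server name and the archive name are placeholders that you replace with your own values.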

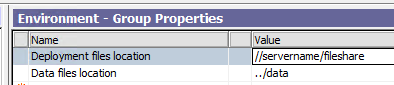

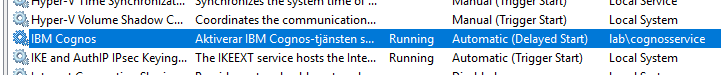

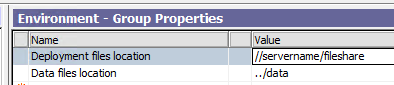

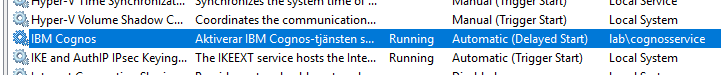

If the deployment folder in Cognos Configuration points to a file share, such as \\servername\sharefolder, then the Cognos Analytics service must run under a Windows service account and not Local System. Local System can only access folders on the same server.

Import the content store by loading the deployment file via Cognos Connection.
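To quickly see whether a deployment folder value is a file share or a local folder, a small check like the one below can help. It is only a sketch: the function name `is_unc_path` is made up for illustration, and it inspects only the text of the path. It does not read Cognos Configuration or check which account the service runs under.

```python
from pathlib import PureWindowsPath


def is_unc_path(folder: str) -> bool:
    """Return True when folder is a UNC share path (server plus share)."""
    # For a UNC path the "drive" part is the server and share name,
    # which starts with two backslashes. For a local path it is e.g. "C:".
    drive = PureWindowsPath(folder).drive
    return drive.startswith("\\\\")
```

If the function returns True for your deployment folder, check in Windows Services that the Cognos Analytics service logs on with a service account that has access to the share.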

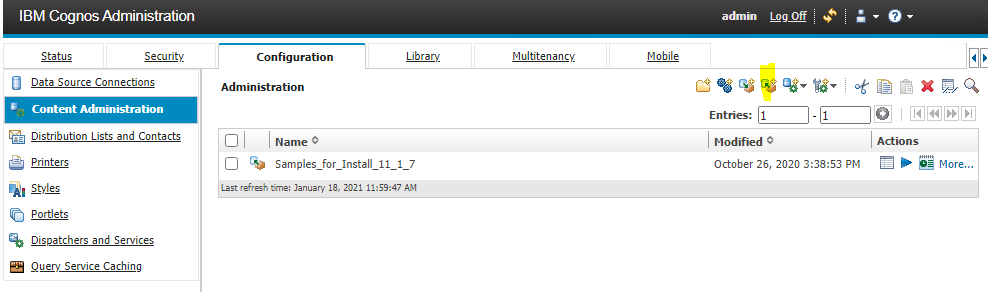

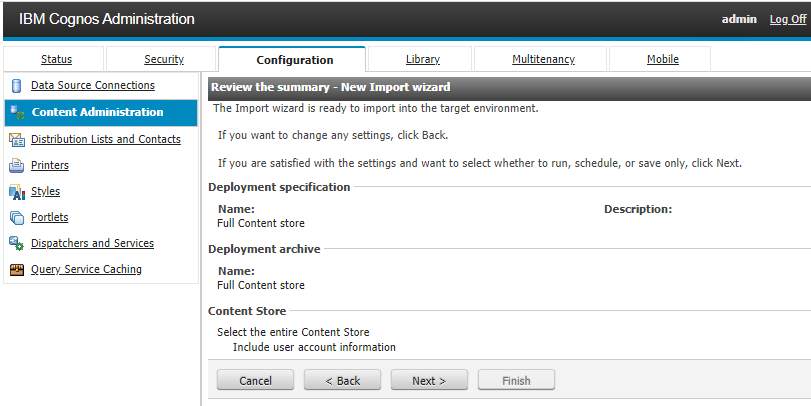

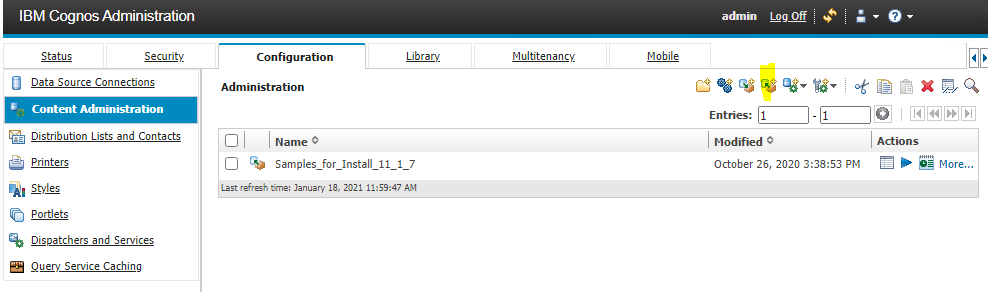

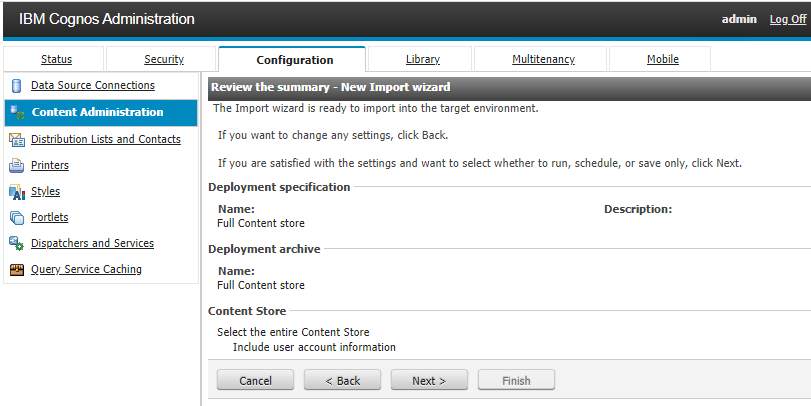

Log in to the new IBMCOGNOS and go to the Administration page, then click Configuration – Content Administration. Click the import icon.

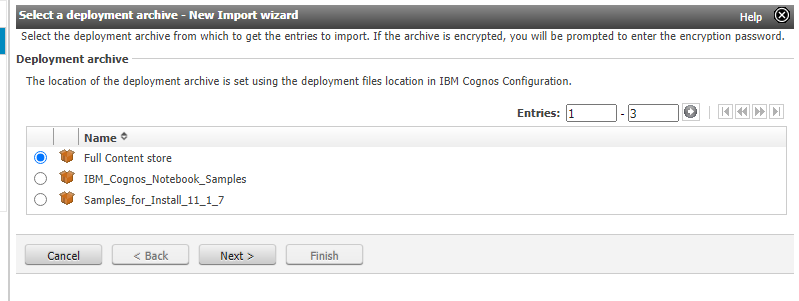

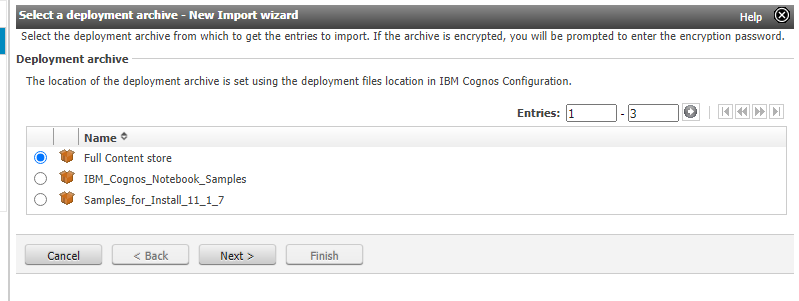

Select your full content store file and click Next

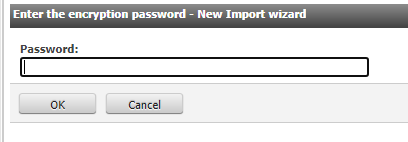

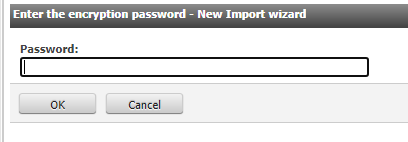

Enter your password. Click OK

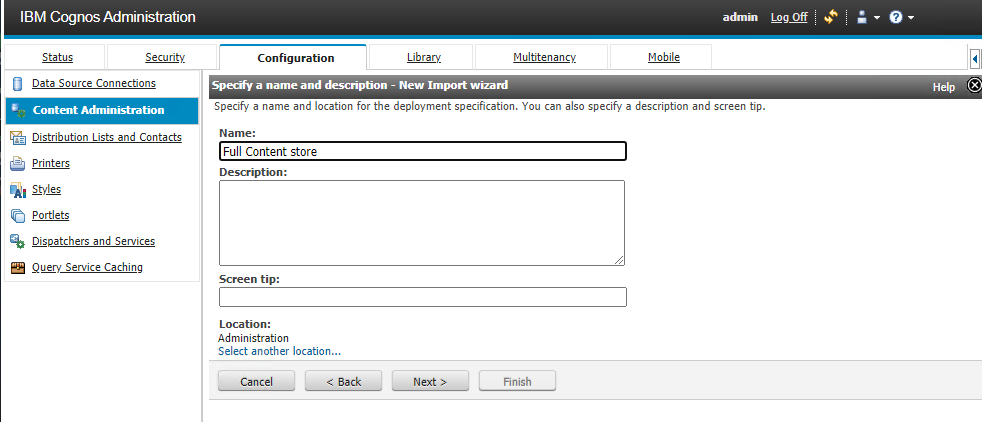

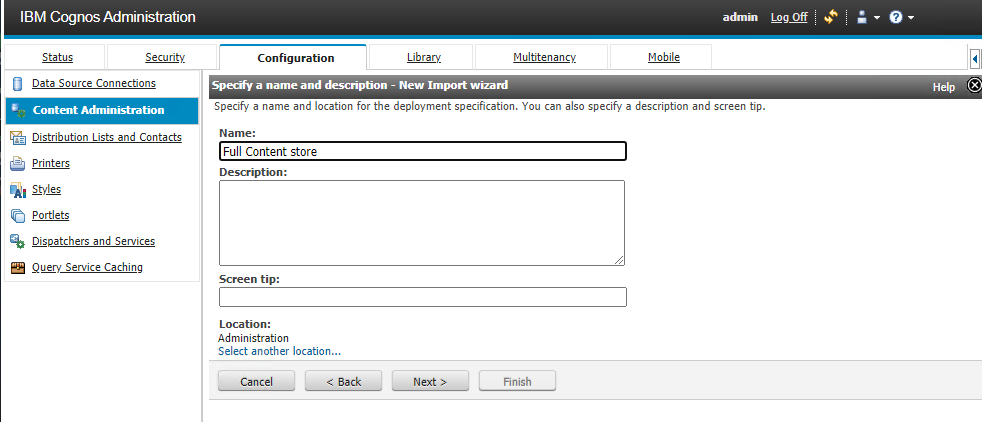

Click Next

Click Next

Click Next

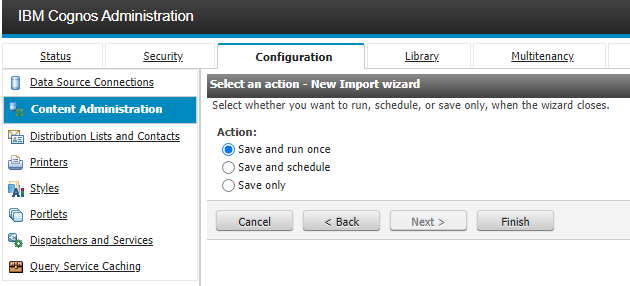

Select “save and run once” and click Finish.

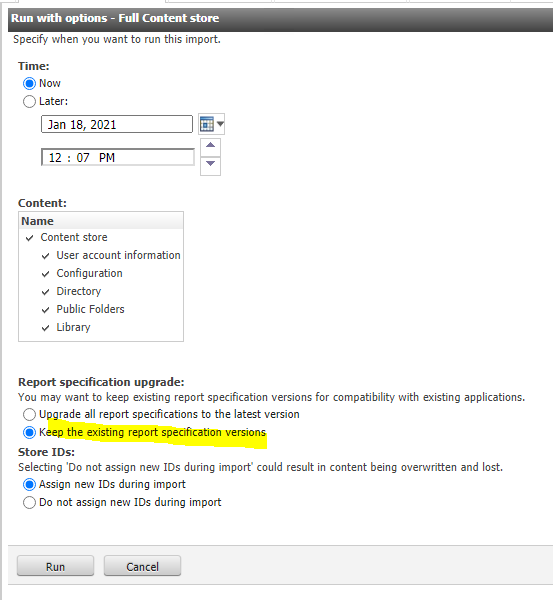

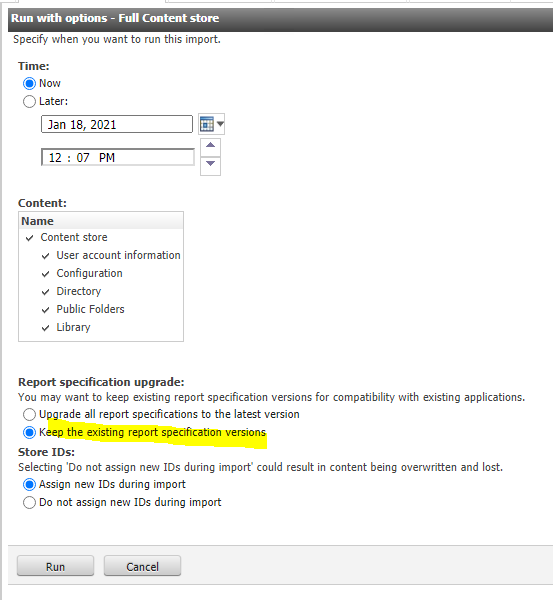

Do not run upgrade of report specifications. Do that at a later time, as it can take a very long time.

Click Run.

Mark “View the details of this import after closing this dialog” and click OK

Click Refresh every 15 minutes to see if it is done. When there is a completion time, the import has finished.

The report may list errors. Note them down and search Google for more information.

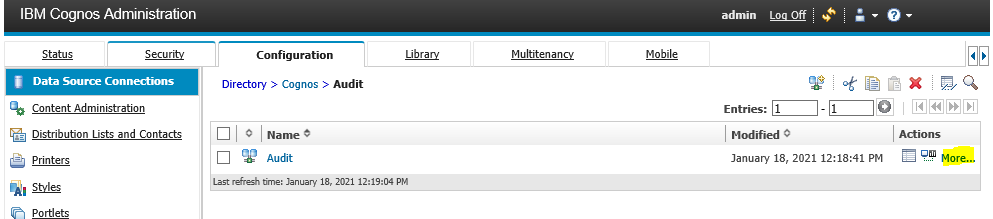

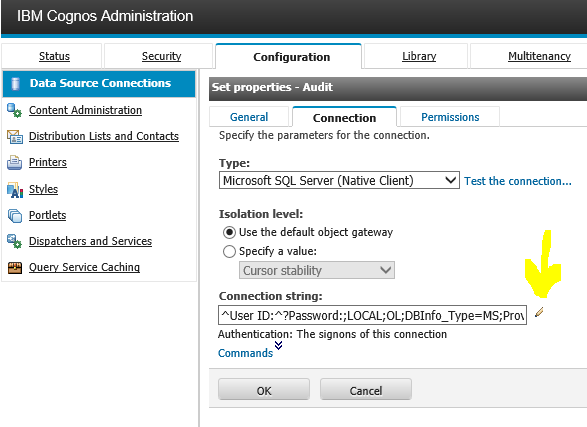

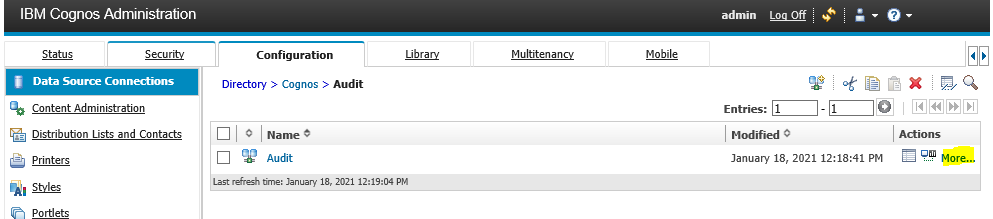

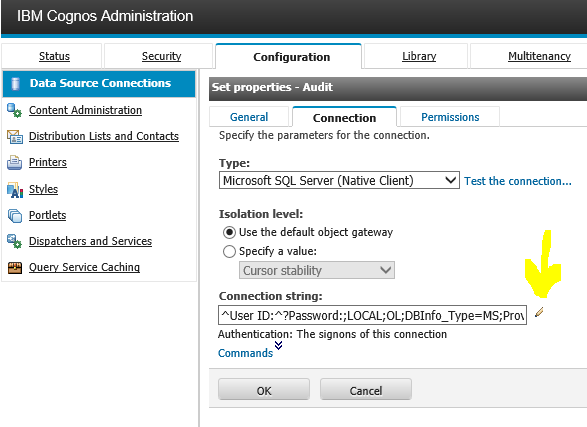

If you have also changed the database server host for your AUDIT database, go to Cognos Administration – Configuration – Data source connections and update the audit data source so that it points to the new database server.

Click on Audit, then on “more” to the right of the test icon.

Click “Set properties”

Click “Connection” tab

Click the pencil icon to open the data source update dialog.

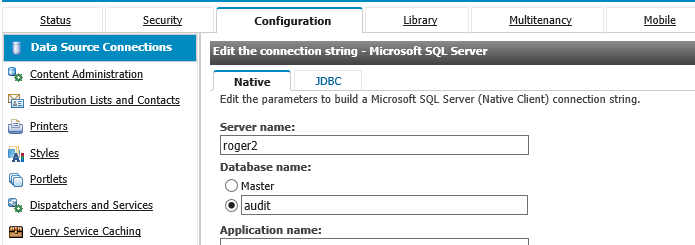

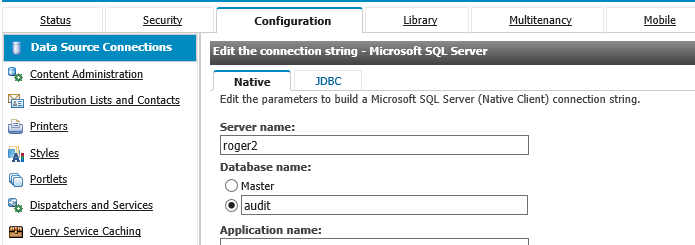

Change the server name and any other values you need to change. Also update the JDBC tab.

Click OK when done.

Test your data source connection.
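If the test fails, it can help to first rule out a plain network problem between the Cognos server and the new database server. The sketch below only checks that a TCP port can be opened. It assumes that your SQL Server instance listens on the default port 1433, which may not be true for named instances or custom setups, and the function name `can_reach` is made up for illustration.

```python
import socket


def can_reach(host: str, port: int = 1433, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # 1433 is the SQL Server default port; change it if yours differs.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unknown host names.
        return False
```

Run it on the Cognos server with the new database server name, for example `can_reach("newsqlserver")`, where the host name is a placeholder. A result of True only shows that the port is open. The login and the database name are still verified by the test inside Cognos.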

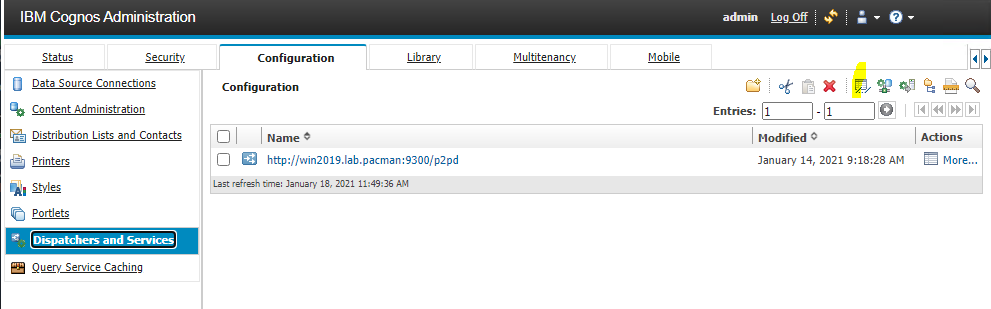

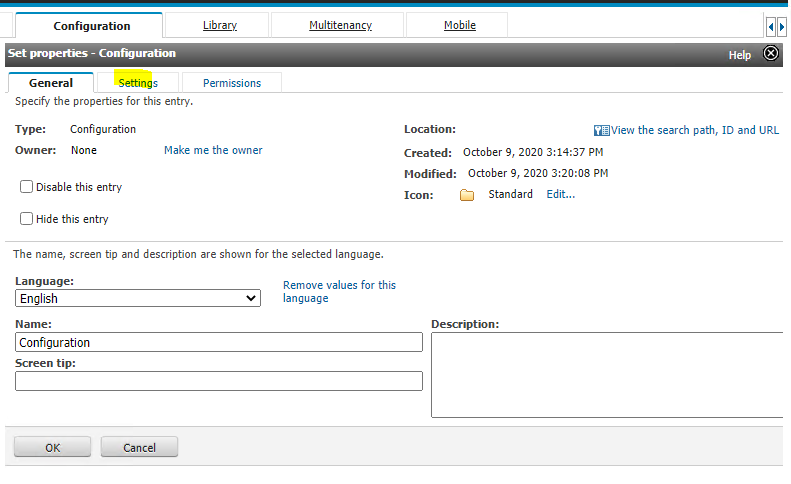

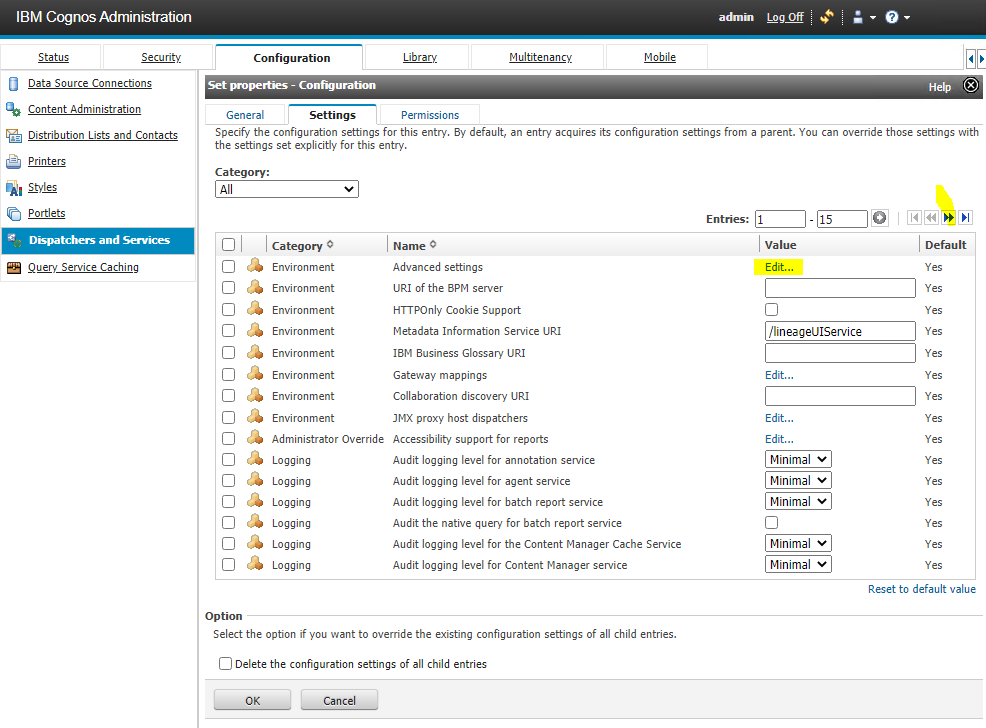

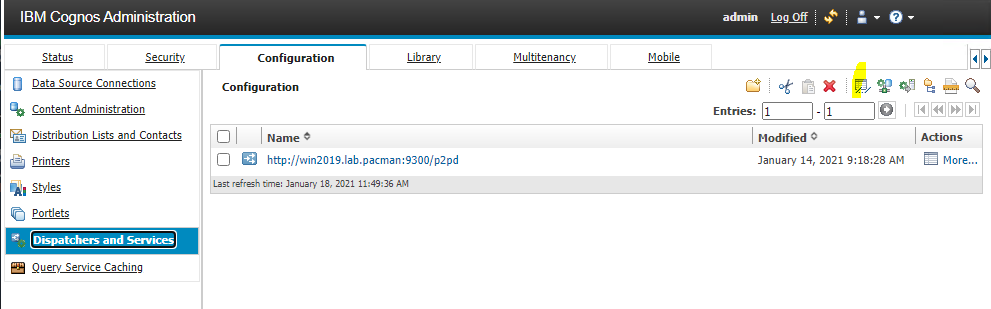

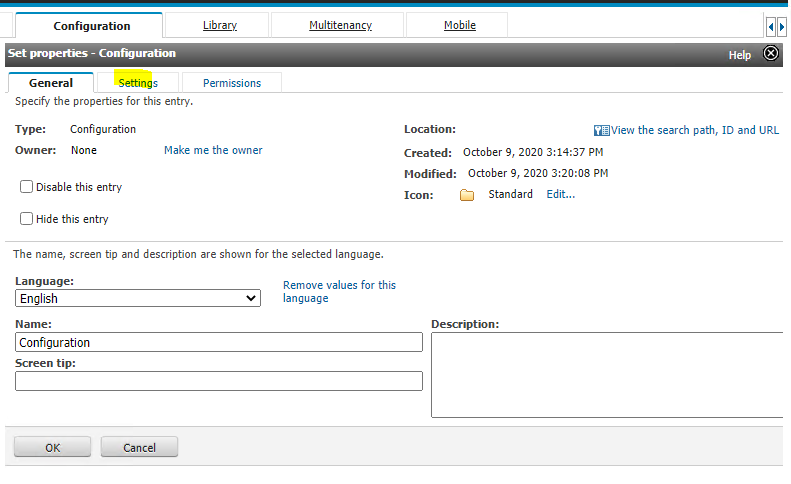

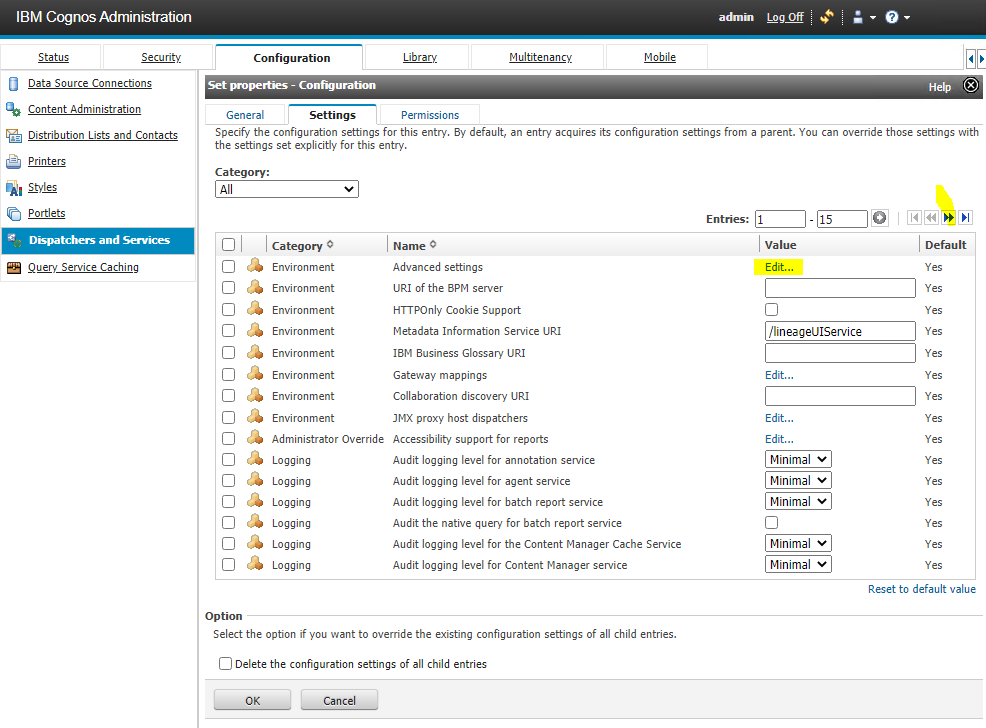

Any special configuration you have made on the Cognos dispatcher is not part of the deployment, so you have to add it again manually. Go to Cognos Administration – Configuration. Click Dispatchers and Services and click the properties icon.

Click on settings

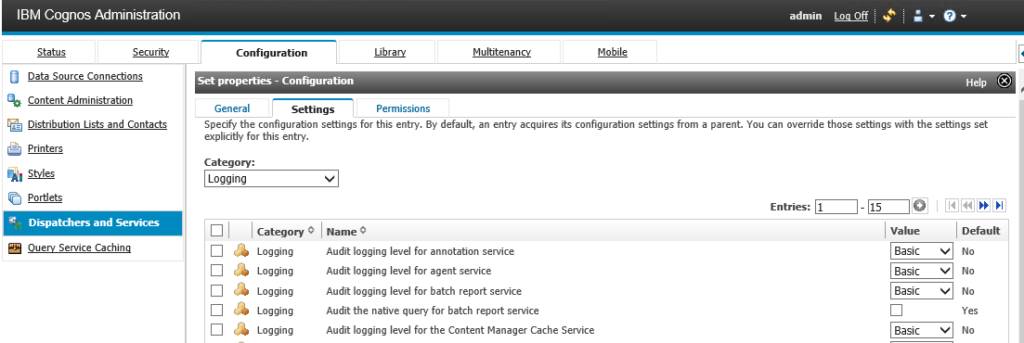

Check all pages for values that are not marked default=Yes, as they have been changed and may need to be entered in the new environment. Only enter values that you know you need in the new environment.

Click the blue Edit link for Advanced settings to see if there are any special settings in the environment.

Configuring advanced settings for specific services (ibm.com)

Repeat the above steps in the new CA11 environment to get the fine-tuning you want.

Logging should in most cases be set to BASIC.

Content Manager service advanced settings (ibm.com)

You can also move the content store by backing up and restoring the full CM database, but then you need to consider other parts, such as old dispatchers, that will follow the move.

More information:

http://mail.heritagebrands.com.au/ibmcognos/documentation/en/ug_cra_10_2/c_deploying_the_entire_content_store.html

https://www.ibm.com/support/pages/what-difference-between-exporting-content-store-cognos-connection-and-doing-database-backup-content-store

https://www.ibm.com/support/pages/how-copy-entire-content-store-another-cognos-analytics-server-same-version

https://www.bspsoftware.com/products/metamanager/Download

https://www.ibm.com/support/knowledgecenter/SSEP7J_11.1.0/com.ibm.swg.ba.cognos.ug_cra.doc/c_deploying_the_entire_content_store.html

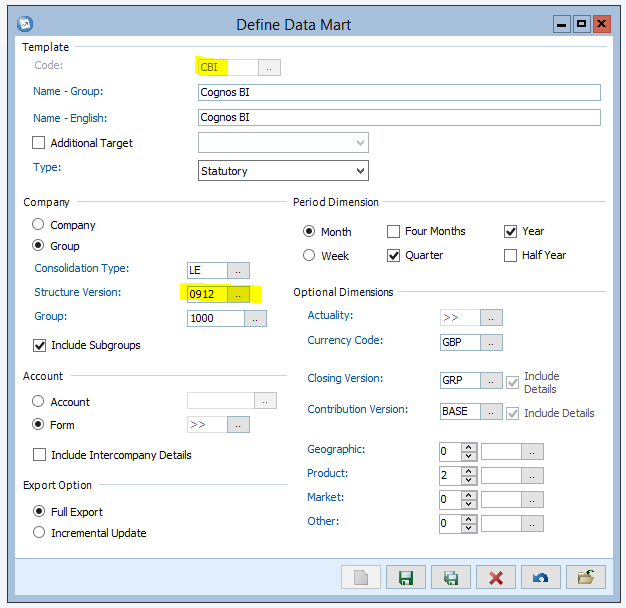

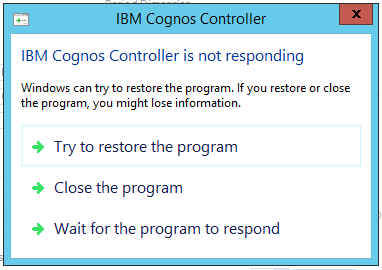

You need to restart the Cognos Controller windows server to release the process.

You need to restart the Cognos Controller windows server to release the process. Kill the controller client program will not help. You get same issue when you go back inside Define Data Mart dialog.

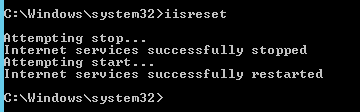

Kill the controller client program will not help. You get same issue when you go back inside Define Data Mart dialog. A restart of IIS looks like it release the process, and you can work again in Cognos Controller.

A restart of IIS looks like it release the process, and you can work again in Cognos Controller.